Introduction

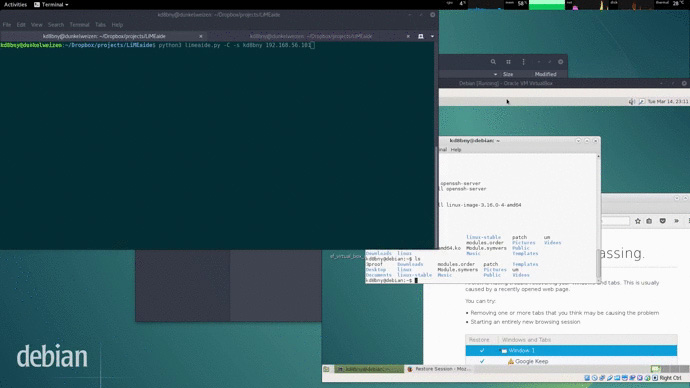

LiMEaide is a Python application, developed by Daryl Bennett, that remotely dumps the RAM of a Linux client and creates a Volatility profile for later analysis on your local host.

LiMEaide: Remotely Dump RAM of a Linux Client

To use LiMEaide, you only need to supply the IP address of a remote Linux client. That’s it.

Working process

- Make a remote connection with the specified client over SSH

- Transfer necessary build files to the remote machine

- Build the memory-scraping Loadable Kernel Module (LKM) LiME

- LKM will dump RAM

- Transfer RAM dump and RAM maps back to host

- Build a Volatility profile
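The first five steps above can be sketched with paramiko. This is only an illustration, not LiMEaide's actual code: the function names, the `/tmp/lime_work` directory, the `LiME.tar.gz` tarball (assumed to unpack into a `LiME/` folder), and a root login are all assumptions made for the example. It also assumes LiME's Makefile names the module `lime-<kernel release>.ko`. Transferring the RAM maps and building the Volatility profile are left out.

```python
import shlex


def build_remote_commands(remote_dir="/tmp/lime_work", dump_name="dump.bin"):
    """Shell commands that build LiME on the client and dump RAM with it."""
    src = f"{remote_dir}/LiME/src"
    lime_args = f"path={remote_dir}/{dump_name} format=lime"
    return [
        # Step 3: build the LKM against the client's running kernel.
        f"make -C {shlex.quote(src)}",
        # Step 4: loading the module writes the dump to the given path.
        f"insmod {shlex.quote(src)}/lime-$(uname -r).ko {shlex.quote(lime_args)}",
        # Unload the module once the dump has been written.
        "rmmod lime",
    ]


def dump_memory(host, user, password, lime_tarball="LiME.tar.gz",
                remote_dir="/tmp/lime_work", dump_name="dump.bin"):
    """Hypothetical end-to-end run; assumes `user` may build and load modules."""
    import paramiko  # third-party; imported here so the helper above has no dependency

    client = paramiko.SSHClient()
    # Accepting unknown host keys keeps the sketch short; verify keys in real use.
    client.set_missing_host_key_policy(paramiko.AutoAddPolicy())
    client.connect(host, username=user, password=password)  # Step 1
    try:
        def run(command):
            _, stdout, stderr = client.exec_command(command)
            stdout.read()
            errors = stderr.read().decode()
            if stdout.channel.recv_exit_status() != 0:
                raise RuntimeError(f"{command!r} failed: {errors}")

        run(f"mkdir -p {shlex.quote(remote_dir)}")
        sftp = client.open_sftp()
        remote_tarball = f"{remote_dir}/LiME.tar.gz"
        sftp.put(lime_tarball, remote_tarball)  # Step 2
        run(f"tar -xzf {shlex.quote(remote_tarball)} -C {shlex.quote(remote_dir)}")
        for command in build_remote_commands(remote_dir, dump_name):
            run(command)
        sftp.get(f"{remote_dir}/{dump_name}", dump_name)  # Step 5
        sftp.close()
    finally:
        client.close()
```

Keeping the command list in its own function makes it possible to inspect what would run on the client before opening any connection.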

Dependencies

- python3: LiMEaide is written in python3, so it is required.

- paramiko: Python library for instantiating an SSH connection with a remote host. Install using pip3.

- dwarfdump: required for Volatility profile building; reads the debugging symbols in the compiled LKM.

- LiME: the most important dependency (it does the dumping).
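Before a first run, it can help to confirm that the host-side dependencies are present. The following is a minimal sketch using only the standard library; the function name `check_dependencies` is made up for this example, and LiMEaide itself may perform its checks differently. `termcolor` is included because the install commands in this article pull it in alongside paramiko.

```python
import importlib.util
import shutil
import sys


def check_dependencies():
    """Report which host-side dependencies appear to be available."""
    return {
        # The interpreter running this script must be Python 3.
        "python3": sys.version_info.major >= 3,
        # find_spec returns None when a module cannot be imported.
        "paramiko": importlib.util.find_spec("paramiko") is not None,
        "termcolor": importlib.util.find_spec("termcolor") is not None,
        # dwarfdump is an external binary, so look for it on PATH.
        "dwarfdump": shutil.which("dwarfdump") is not None,
    }


if __name__ == "__main__":
    for name, available in check_dependencies().items():
        print(f"{name}: {'found' if available else 'MISSING'}")
```

Anything reported as missing can be installed with the commands in the next section.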

Installing Dependencies

Python:

- DEB base

sudo apt-get install python3-paramiko python3-termcolor

- RPM base

sudo yum install python3-paramiko python3-termcolor

- pip3

sudo pip3 install paramiko termcolor

dwarfdump:

- DEB package manager

sudo apt-get install dwarfdump

- RPM package manager

sudo yum install libdwarf-tools

LiME:

LiMEaide will automatically download LiME and place it in the correct directory, but you can also install it manually by following the steps below:

- Download the LiME source;

- Move the source into the LiMEaide/tools directory;

- Rename the folder to LiME, so that the final path is LiMEaide/tools/LiME
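The manual steps amount to one move-and-rename. A small sketch, assuming the LiME source has already been downloaded and unpacked somewhere on disk; the function name `install_lime` and both arguments are hypothetical, chosen for this example only:

```python
import shutil
from pathlib import Path


def install_lime(downloaded_source, limeaide_root):
    """Move an unpacked LiME source tree to <limeaide_root>/tools/LiME."""
    target = Path(limeaide_root) / "tools" / "LiME"
    if target.exists():
        # Refuse to overwrite an existing copy.
        raise FileExistsError(f"{target} already exists")
    target.parent.mkdir(parents=True, exist_ok=True)
    # Moving to a new name covers both the "move" and the "rename" step.
    shutil.move(str(downloaded_source), str(target))
    return target
```

For example, `install_lime("Downloads/LiME-master", "LiMEaide")` would leave the source at `LiMEaide/tools/LiME`, where `LiME-master` stands in for whatever the unpacked folder happens to be called.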

Usage

To run LiMEaide:

python3 limeaide.py <IP>

Options:

limeaide.py [OPTIONS] REMOTE_IP

-h, --help

Shows the help dialog

-u, --user : <user>

Execute memory grab as sudo user. This is useful when root privileges are not granted.

-p, --profile : <distro> <kernel version> <arch>

Skip the profiler by providing the distribution, kernel version, and architecture of the remote client.

-N, --no-profiler

Do NOT run profiler and force the creation of a new module/profile for the client.

-C, --dont-compress

Do not compress the memory file. By default, memory is compressed on the host. If you experience issues, toggle this flag. In my tests I see a ~60% reduction in file size.

--delay-pickup

Execute a job to create a RAM dump on the target system that you will retrieve later. The stored job is located in the scheduled_jobs/ directory and ends in .dat

-P, --pickup <path to job file .dat>

Pick up a job you previously ran with the --delay-pickup switch. The file that follows this switch is located in the scheduled_jobs/ directory and ends in .dat

-o, --output : <name>

Change name of output file. Default is dump.bin

-c, --case : <case num>

Prepend the case number to the name of the output directory.

--force-clean

If a previous attempt failed, clean up the client.
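To see how these options fit together, here is an `argparse` sketch that mirrors the flags listed above. It is a reconstruction for illustration, not the parser from LiMEaide's source: the flag names come from this article, while the help strings and the `build_parser` function name are assumptions.

```python
import argparse


def build_parser():
    """Argument parser mirroring the documented limeaide.py options."""
    parser = argparse.ArgumentParser(prog="limeaide.py")
    parser.add_argument("remote_ip", metavar="REMOTE_IP",
                        help="IP address of the remote Linux client")
    parser.add_argument("-u", "--user", help="run the memory grab as this sudo user")
    # Three values in a fixed order: distribution, kernel version, architecture.
    parser.add_argument("-p", "--profile", nargs=3,
                        metavar=("DISTRO", "KERNEL_VERSION", "ARCH"),
                        help="skip the profiler by describing the client")
    parser.add_argument("-N", "--no-profiler", action="store_true",
                        help="force creation of a new module/profile")
    parser.add_argument("-C", "--dont-compress", action="store_true",
                        help="do not compress the memory file")
    parser.add_argument("--delay-pickup", action="store_true",
                        help="create the dump now and retrieve it later")
    parser.add_argument("-P", "--pickup", metavar="JOB_FILE",
                        help="path to a .dat job file in scheduled_jobs/")
    parser.add_argument("-o", "--output", default="dump.bin",
                        help="name of the output file")
    parser.add_argument("-c", "--case", help="case number for the output directory")
    parser.add_argument("--force-clean", action="store_true",
                        help="clean up the client after a failed attempt")
    return parser


if __name__ == "__main__":
    print(build_parser().parse_args())
```

With this layout, `-p` consumes exactly three values, so a command such as `limeaide.py -p <distro> <kernel version> <arch> <IP>` still leaves the final argument to be read as the client address.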

Limitations

- Only supports bash.

- Modules must be built on the remote client.
